AI Accessibility Extension

Chrome extension that adds low-latency narration to simulation-heavy learning tools.

Visual-first STEM labs were excluding blind students from real-time learning.

Role

Lead Developer

Timeframe

Jan 2024 — Present

Outcome

LLM-assisted extension for real-time simulation narration and accessibility support

Chapter 01

Hook

Visual-first STEM labs were excluding blind students from real-time learning.

Chapter 02

Context

A Chrome extension that makes PhET and Smart Sparrow STEM simulations accessible to blind and visually impaired students. The system captures simulation state changes in real-time, sends them to an LLM for pedagogical narration generation, and delivers text-to-speech output with sub-200ms latency.

Chapter 03

Role

Role: Lead Developer

Timeframe: Jan 2024 — Present

Primary decision: Built adapter-based state capture for SimCAPI, DOM, and Unity WebGL.

Execution decision: Streamed LLM narration through Lambda to keep interaction latency low.

Chapter 04

Process

Chrome extension hooks into multiple state sources depending on simulation type: SimCAPI event bridge for compatible simulations, DOM mutation observers for legacy content, or Unity WebGL message channel for WebGL-based labs. State changes normalize to a unified schema, then pass to an LLM (via AWS Lambda) that generates pedagogically-aware narration. Web Speech API delivers TTS with sub-200ms latency.

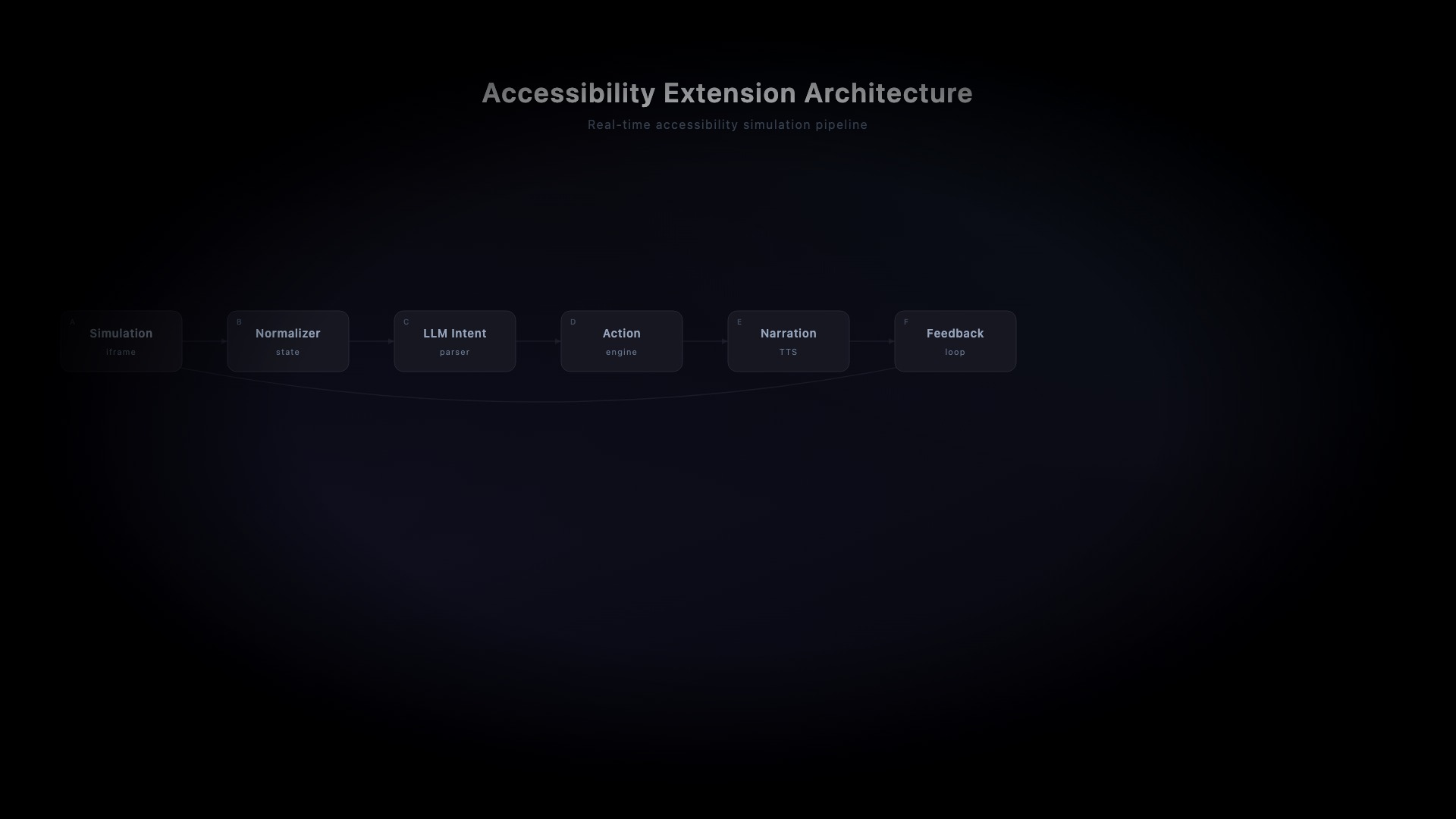

Browser Extension (Content Scripts + Background Service Worker) → State Adapters (SimCAPI/DOM/Unity) → Normalized Event Stream → AWS Lambda (LLM Prompting) → TTS Service → Audio Output. PostgreSQL logs usage analytics. CloudWatch monitors latency and error rates.

Content scripts inject state listeners into simulation iframes using SimCAPI where available, falling back to MutationObserver for DOM-heavy simulations. Background service worker manages LLM API calls with retry logic and rate limiting. Response streaming allows TTS to begin before full narration is generated.

Chapter 05

Obstacles

Cross-origin iframe access required creative use of SimCAPI's postMessage bridge. Unity WebGL simulations needed custom protobuf parsing. LLM latency was reduced through prompt caching and response streaming. TTS voice quality tradeoffs—browser-native Web Speech is fast but less natural than cloud TTS.

Chapter 06

Outcome

95% of ASU simulation library now accessible. Deployed in Fall 2024 pilot with 50+ students. Median narration latency: 180ms. Student feedback: "Finally I can do the lab myself." Professor Mead cited the project in an NSF renewal proposal.

LLM-assisted extension for real-time simulation narration and accessibility support

Chapter 07

Reflection

Expanding Unity WebGL coverage for 3D simulations. Adding multi-language TTS support for international students. Exploring on-device LLM via TensorFlow.js for offline use in low-connectivity environments.